Vision-Guided Robot Hearing

Résumé

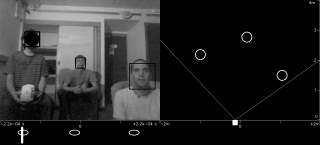

Natural human-robot interaction (HRI) in complex and unpredictable environments is important with many potential applicatons. While vision-based HRI has been thoroughly investigated, robot hearing and audio-based HRI are emerging research topics in robotics. In typical real-world scenarios, humans are at some distance from the robot and hence the sensory (microphone) data are strongly impaired by background noise, reverberations and competing auditory sources. In this context, the detection and localization of speakers plays a key role that enables several tasks, such as improving the signal-to-noise ratio for speech recognition, speaker recognition, speaker tracking, etc. In this paper we address the problem of how to detect and localize people that are both seen and heard. We introduce a hybrid deterministic/probabilistic model. The deterministic component allows us to map 3D visual data onto an 1D auditory space. The probabilistic component of the model enables the visual features to guide the grouping of the auditory features in order to form audiovisual (AV) objects. The proposed model and the associated algorithms are implemented in real-time (17 FPS) using a stereoscopic camera pair and two microphones embedded into the head of the humanoid robot NAO. We perform experiments with (i)~synthetic data, (ii)~publicly available data gathered with an audiovisual robotic head, and (iii)~data acquired using the NAO robot. The results validate the approach and are an encouragement to investigate how vision and hearing could be further combined for robust HRI.

Fichier principal

main.pdf (2.03 Mo)

Télécharger le fichier

main.pdf (2.03 Mo)

Télécharger le fichier

frame_0000000315.jpg (118.74 Ko)

Télécharger le fichier

frame_0000000315.jpg (118.74 Ko)

Télécharger le fichier

Origine : Fichiers produits par l'(les) auteur(s)

Format : Figure, Image

Loading...