Local Shape Editing at the Compositing Stage

Abstract

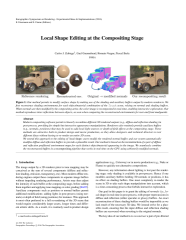

Modern compositing software makes it possible to linearly recombine different 3D rendered outputs (e.g., diffuse and reflection shading) in post-processing, enabling simple but interactive appearance manipulations. Renderers also routinely provide auxiliary buffers (e.g., normals, positions) that can be used to add local light sources or depth-of-field effects at the compositing stage. These methods are attractive in both product design and movie production, as they let designers and technical directors test different ideas without re-rendering an entire 3D scene.
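The linear recombination mentioned above amounts to a per-pixel weighted sum of render passes. The sketch below illustrates that idea only; the `recombine` helper, the single-channel list-of-rows buffer layout, and the sample values are hypothetical and do not come from any particular compositing package.

```python
from typing import List

# Hypothetical buffer layout: a single-channel image stored as rows of floats.
Buffer = List[List[float]]


def recombine(buffers: List[Buffer], weights: List[float]) -> Buffer:
    """Return the per-pixel weighted sum of same-sized render passes."""
    if len(buffers) != len(weights):
        raise ValueError("expected exactly one weight per buffer")
    height, width = len(buffers[0]), len(buffers[0][0])
    out = [[0.0] * width for _ in range(height)]
    for buf, weight in zip(buffers, weights):
        for y in range(height):
            for x in range(width):
                out[y][x] += weight * buf[y][x]
    return out


# Usage: keep the diffuse pass as-is and halve the reflection pass.
diffuse = [[0.2, 0.4], [0.6, 0.8]]
reflection = [[0.1, 0.1], [0.0, 0.5]]
beauty = recombine([diffuse, reflection], [1.0, 0.5])
```

Because the operation is linear, changing a weight only requires re-summing the stored passes, which is why such edits stay interactive without re-rendering.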

We extend this approach to the editing of local shape: users modify the rendered normal buffer, and our system automatically updates the diffuse and reflection buffers to produce a plausible result. Our method is based on reconstructing a pair of diffuse and reflection prefiltered environment maps for each distinct object/material appearing in the image. We seamlessly combine the reconstructed buffers in a recompositing pipeline that runs in real time on the GPU with arbitrarily modified normals.
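To make the reshading step more concrete, here is a simplified sketch of how an edited normal could yield new diffuse and reflection values once a pair of prefiltered environment maps is available. It is not the paper's reconstruction or GPU pipeline. It assumes each map is exposed as a plain function of direction (`sky_diffuse` and `sky_reflect` below are hypothetical analytic stand-ins, not reconstructed maps), indexes the diffuse map by the normal and the reflection map by the view vector mirrored about the normal, r = 2(n·v)n − v.

```python
import math
from typing import Callable, Tuple

Vec3 = Tuple[float, float, float]
# Hypothetical representation of a prefiltered environment map: direction -> radiance.
EnvMap = Callable[[Vec3], float]


def normalize(v: Vec3) -> Vec3:
    """Scale a non-zero vector to unit length."""
    length = math.sqrt(v[0] * v[0] + v[1] * v[1] + v[2] * v[2])
    return (v[0] / length, v[1] / length, v[2] / length)


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def reflect(view: Vec3, normal: Vec3) -> Vec3:
    """Mirror the surface-to-eye vector about the unit normal: r = 2(n.v)n - v."""
    d = dot(normal, view)
    return (2.0 * d * normal[0] - view[0],
            2.0 * d * normal[1] - view[1],
            2.0 * d * normal[2] - view[2])


def reshade(normal: Vec3, view: Vec3,
            diffuse_env: EnvMap, reflect_env: EnvMap) -> Tuple[float, float]:
    """Look up (diffuse, reflection) values for a possibly edited normal."""
    n = normalize(normal)
    v = normalize(view)
    return diffuse_env(n), reflect_env(reflect(v, n))


# Hypothetical stand-in maps: a sky brighter toward +z, and a sharp highlight at +z.
def sky_diffuse(d: Vec3) -> float:
    return 0.5 + 0.5 * d[2]


def sky_reflect(d: Vec3) -> float:
    return max(0.0, d[2]) ** 8


# Usage: compare the original normal with an edited normal tilted 45 degrees.
view_dir = (0.0, 0.0, 1.0)
original = reshade((0.0, 0.0, 1.0), view_dir, sky_diffuse, sky_reflect)
edited = reshade((1.0, 0.0, 1.0), view_dir, sky_diffuse, sky_reflect)
```

Tilting the normal moves the reflected direction away from the highlight, so the edited pixel loses its reflection while its diffuse value drops only slightly. The two values would then be recombined like ordinary render passes.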

Main file

Zubiaga - Local Shape Editing at the Compositing Stage.pdf (5.76 MB)

RepImg.jpg (1.51 MB)

Zubiaga - Local Shape Editing at the Compositing Stage - PRESENTATION.pdf (3.78 MB)

Zubiaga - Local Shape Editing at the Compositing Stage - VIDEO.mp4 (53.25 MB)

Origin: Files produced by the author(s)

Format: Figure, Image