Exploiting the Complementarity of Audio and Visual Data in Multi-Speaker Tracking

Abstract

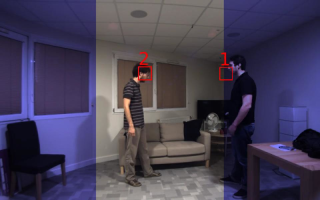

Multi-speaker tracking is a central problem in human-robot interaction. In this context, exploiting auditory and visual information is gratifying and challenging at the same time. Gratifying because the complementary nature of auditory and visual information allows us to be more robust against noise and outliers than unimodal approaches. Challenging because how to properly fuse auditory and visual information for multi-speaker tracking is far from being a solved problem. In this paper we propose a probabilistic generative model that tracks multiple speakers by jointly exploiting auditory and visual features in their own representation spaces. Importantly, the method is robust to missing data and is therefore able to track even when observations from one of the modalities are absent. Quantitative and qualitative results on the AVDIAR dataset are reported.

Main file

ICCVW_submission.pdf (5.57 MB)


0504_1000.png (467.97 KB)


0504_1000.jpg (38.89 KB)


Origin: Files produced by the author(s)

Format: Figure, Image

