A Real-Time System for Full Body Interaction with Virtual Worlds

Abstract

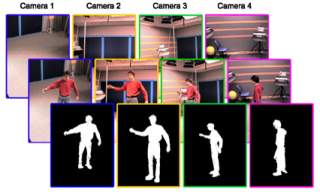

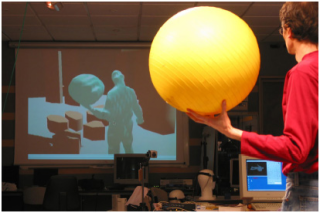

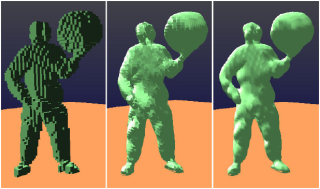

Real-time video acquisition is becoming a reality with the latest camera technology, and three-dimensional models can be reconstructed from multiple views using visual hull carving techniques. However, combining these approaches to obtain a moving 3D model from simultaneous video captures remains a technological challenge. In this paper we demonstrate a complete system architecture that allows the real-time (≥ 30 fps) acquisition and full-body reconstruction of one or several actors, which can then be integrated into a virtual environment. A volume of approximately 2 m³ is observed by at least four video cameras, and the video streams are processed to obtain a volumetric model of the moving actors. The reconstruction combines pipelined and parallel processing, using N individual PCs for N cameras and a central computer for integration, reconstruction and display. A surface description is then obtained with a marching cubes algorithm. We discuss the overall architecture choices, with particular emphasis on the real-time constraint and latency issues, and show that software synchronization of the video streams is both sufficient and efficient. The ability to reconstruct a full-body model of the actors, together with any props or other real objects, opens the way to very natural interaction techniques that use the entire body and real objects manipulated by the user, whose avatar is immersed in a virtual world.
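
Visual hull carving keeps a voxel only if its projection falls inside the actor's silhouette in every camera view. The sketch below illustrates that occupancy test on a small voxel grid. It is not the paper's implementation: it assumes calibrated cameras given as 3×4 projection matrices and binary silhouette masks, and the names `project`, `in_silhouette` and `carve_visual_hull` are hypothetical.

```python
import math
from typing import List, Optional, Sequence, Set, Tuple

Matrix3x4 = Sequence[Sequence[float]]  # camera projection matrix, 3 rows x 4 columns
Mask = List[List[bool]]                # mask[row][col] is True inside the silhouette
Voxel = Tuple[int, int, int]


def project(P: Matrix3x4,
            point: Tuple[float, float, float]) -> Optional[Tuple[float, float]]:
    """Project a 3D point to pixel coordinates (u, v); None if it lies behind the camera."""
    homog = (point[0], point[1], point[2], 1.0)
    u, v, w = (sum(P[r][c] * homog[c] for c in range(4)) for r in range(3))
    if w <= 0:
        return None
    return u / w, v / w


def in_silhouette(mask: Mask, pixel: Optional[Tuple[float, float]]) -> bool:
    """True if the pixel falls inside the image and inside the silhouette."""
    if pixel is None:
        return False
    col, row = math.floor(pixel[0]), math.floor(pixel[1])
    return 0 <= row < len(mask) and 0 <= col < len(mask[0]) and mask[row][col]


def carve_visual_hull(cameras: List[Tuple[Matrix3x4, Mask]],
                      grid_size: int, voxel_size: float) -> Set[Voxel]:
    """Keep the voxels whose centre projects inside the silhouette in every view."""
    occupied: Set[Voxel] = set()
    for i in range(grid_size):
        for j in range(grid_size):
            for k in range(grid_size):
                centre = ((i + 0.5) * voxel_size,
                          (j + 0.5) * voxel_size,
                          (k + 0.5) * voxel_size)
                # all() stops at the first view that carves the voxel away.
                if all(in_silhouette(mask, project(P, centre)) for P, mask in cameras):
                    occupied.add((i, j, k))
    return occupied
```

For instance, two orthographic toy views (one looking along z, one along x) that each see a 2×2 square silhouette carve a 4×4×4 grid down to the 2×2×2 block where both silhouettes overlap. This triple loop only shows the occupancy test; meeting a 30 fps target would require a much faster formulation, for example precomputed voxel-to-pixel lookups or parallel evaluation.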
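
The abstract states that software synchronization of the video streams is sufficient, but it does not describe the scheme. One plausible reading is sketched below, purely as an assumption: every camera PC timestamps its frames against a shared clock, and the central computer picks, for a reference instant, the nearest frame from each camera, rejecting the set if any camera is off by more than a tolerance (here, half a frame period). The function `select_synchronized_set` and the sample timestamps are hypothetical.

```python
from typing import Dict, List, Optional

FRAME_PERIOD = 1.0 / 30.0  # seconds per frame at 30 fps


def select_synchronized_set(frames: Dict[str, List[float]],
                            reference: float,
                            tolerance: float) -> Optional[Dict[str, float]]:
    """For each camera, pick the frame timestamp closest to `reference`.

    Returns None when some camera has no frame within `tolerance` seconds,
    so the caller can skip this reconstruction step instead of mixing
    frames captured at noticeably different instants.
    """
    chosen: Dict[str, float] = {}
    for camera, timestamps in frames.items():
        if not timestamps:
            return None
        nearest = min(timestamps, key=lambda t: abs(t - reference))
        if abs(nearest - reference) > tolerance:
            return None
        chosen[camera] = nearest
    return chosen


# Hypothetical timestamps (seconds) from four unsynchronized cameras.
frames = {
    "cam0": [0.000, 0.033, 0.067],
    "cam1": [0.005, 0.038, 0.071],
    "cam2": [0.010, 0.043, 0.076],
    "cam3": [0.002, 0.035, 0.068],
}
print(select_synchronized_set(frames, reference=0.040, tolerance=FRAME_PERIOD / 2))
# {'cam0': 0.033, 'cam1': 0.038, 'cam2': 0.043, 'cam3': 0.035}
```

Rejecting a mismatched set, rather than waiting for a hardware trigger, trades an occasional dropped reconstruction for simpler cameras and wiring.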
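
The tension between the real-time constraint and latency in a pipelined design can be stated with a simple model. Assume, for illustration, that each frame passes through $S$ stages (for example, per-camera processing on the N camera PCs, then reconstruction and display on the central computer), that stage $i$ takes $t_i$ seconds per frame, and that all stages run concurrently on successive frames. The N camera PCs work in parallel, so their stage contributes the time of the slowest camera, not the sum. The $t_i$ are generic symbols, not measurements from the paper, and transfer and waiting times are ignored.

```latex
f = \frac{1}{\max_{1 \le i \le S} t_i}, \qquad
L \;\ge\; \sum_{i=1}^{S} t_i, \qquad
f \ge 30\ \text{fps} \iff \max_{1 \le i \le S} t_i \le \frac{1}{30}\ \text{s} \approx 33.3\ \text{ms}.
```

Pipelining therefore raises the frame rate $f$ to the rate of the slowest stage, but it does not shorten the capture-to-display latency $L$, which still accumulates over every stage.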

Main file

final2.pdf (2.11 MB)

bg_subtraction.jpg (70.23 KB), format: figure, image

00interaction_single.jpg (62.25 KB), format: figure, image

0interaction.jpg (78.67 KB), format: figure, image

Paper35-QuickTime-mp4-Video1.mp4 (7.57 MB), format: other

Paper35-QuickTime-mp4-Video2.mp4 (8.71 MB), format: other

actor_and_its_avatar.jpg (61.13 KB), format: figure, image

avatar_different_aspects.jpg (96.73 KB), format: figure, image

Origin: files produced by the author(s)