Interactive GigaVoxels

Abstract

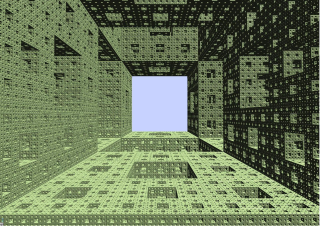

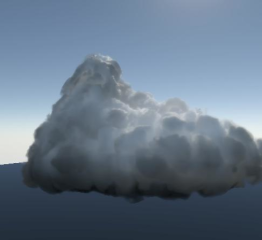

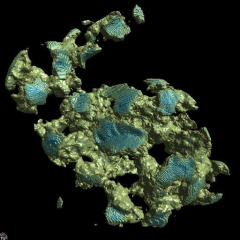

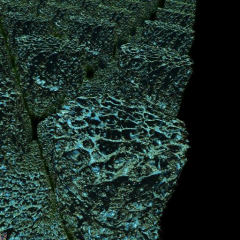

We propose a new approach for the interactive rendering of large, highly detailed scenes. It is based on a new representation and algorithm for large and detailed volume data, especially well suited to cases where detail is concentrated at the interface between free space and clusters of density. This is the case, for instance, with cloudy skies and landscapes, as well as data currently represented as relief maps, hypertextures or volumetric textures. Existing approaches do not efficiently store, manage and render such data, especially at high resolution and over large extents. Our method is based on a dynamic generalized octree with MIP-mapped 3D texture bricks in its leaves. Data is stored only for the regions visible from the current viewpoint, at the appropriate resolution. Since our target scenes contain many sparse opaque clusters, this keeps memory and bandwidth consumption low during exploration. Ray-marching stops quickly upon reaching opaque regions, and we also efficiently skip areas of constant density. A key originality of our algorithm is that it relies directly on the ray-marcher to detect missing data. The march along every ray in every pixel may be interrupted while data is generated or loaded. It hence achieves interactive performance on very large volume data sets. Both our data structure and algorithm are well suited to modern GPUs. We demonstrate our approach in several typical situations: exploration of a 3D scan (8192^3 resolution amplified to 65536^3), of hypertextured meshes (16384^3 virtual resolution), and of a Sierpinski sponge (8.4M^3 virtual resolution), all rendered at an interactive frame rate of 10 to 20 fps and fitting within the limited GPU memory budget.
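To make the traversal described in the abstract more concrete, here is a minimal CPU sketch in Python of one ray crossing a sequence of octree nodes. It is not the authors' GPU implementation. The `Node` class, the `march` function, the `opaque` threshold and the exponential absorption model are all assumptions introduced for illustration, and the octree is reduced to the ordered list of nodes a single ray would cross. The sketch shows three ideas: constant-density nodes are handled in one step, marching stops once the ray is opaque, and a node with no data interrupts the ray and is reported so that it can be generated or loaded.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Node:
    """Hypothetical stand-in for one octree node crossed by a ray."""
    length: float                        # distance travelled inside the node
    constant: Optional[float] = None     # density if the node is homogeneous
    brick: Optional[List[float]] = None  # density samples if a brick is loaded


def march(nodes: List[Node], opaque: float = 0.99) -> Tuple[float, List[int]]:
    """Composite opacity front to back along one ray.

    Returns the accumulated opacity and the indices of nodes whose data
    was missing when the ray reached them.
    """
    alpha = 0.0
    missing: List[int] = []
    for index, node in enumerate(nodes):
        if alpha >= opaque:
            break  # early termination: nothing behind is visible
        if node.constant is not None:
            # Constant-density node: cross it in a single analytic step.
            a = 1.0 - math.exp(-node.constant * node.length)
            alpha += (1.0 - alpha) * a
        elif node.brick is not None:
            # Brick node: step through its samples.
            step = node.length / len(node.brick)
            for density in node.brick:
                alpha += (1.0 - alpha) * (1.0 - math.exp(-density * step))
                if alpha >= opaque:
                    break
        else:
            # No data yet: record the request and interrupt this ray.
            missing.append(index)
            break
    return alpha, missing


if __name__ == "__main__":
    empty = Node(1.0, constant=0.0)
    dense = Node(1.0, brick=[100.0] * 4)
    unloaded = Node(1.0)
    print(march([empty, dense, unloaded]))  # unloaded node hidden behind dense one
    print(march([empty, unloaded]))         # unloaded node is reached and requested
```

In the first call the ray saturates inside the dense brick, so the unloaded node behind it is never requested. In the second call the ray reaches the unloaded node and reports its index. This is the sense in which only data visible from the current viewpoint needs to be produced.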

Main file

rap-rech2-num.pdf (2.78 MB)

Download file

0RayMarchingSierpin.jpg (624.19 KB)

Download file

BONE1.avi (21.59 MB)

Download file

BUNNY_ROCK.avi (23.76 MB)

Download file

DigiLezard1_Xvid2.avi (31.77 MB)

Download file

Fractal2.avi (55.69 MB)

Download file

PresGigaVoxels.ppt (6.96 MB)

Download file

Sierpinski5.avi (18.26 MB)

Download file

cloud.jpg (10.27 KB)

Download file

resultBunnyTrou.jpg (44.4 KB)

Download file

teaserBone.jpg (79.66 KB)

Download file

Origin: Files produced by the author(s)

Format: Figure, Image

Format: Video

Format: Video

Format: Video

Format: Video

Format: Other

Format: Video

Format: Figure, Image

Format: Figure, Image

Format: Figure, Image
