INRIA-LEARs participation to ImageCLEF 2009

Abstract

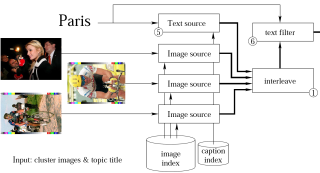

We participated in the Photo Annotation and Photo Retrieval tasks of ImageCLEF 2009. For the Photo Annotation task we compared TagProp, SVMs, and logistic discriminant (LD) models. TagProp is a nearest-neighbor based system that learns a distance measure between images to define the neighbors. In the second system a separate SVM is trained for each annotation word. The third system treats mutually exclusive terms more naturally by assigning them probabilities that sum to one. The experiments show that (i) both TagProp and SVMs benefit from a distance combination learned with TagProp, (ii) the TagProp system, which has very few trainable parameters, performs somewhat worse than the SVM runs in terms of EEC and AUC but better in terms of the hierarchical image annotation score (HS), and (iii) LD is best in terms of HS and close to the SVM run in terms of EEC and AUC. In our experiments for the Photo Retrieval task we compare a system using only visual search with systems that add a simple form of text matching and/or duplicate removal to increase the diversity of the search results. For the visual search we use our image matching system, which is efficient and yields state-of-the-art image retrieval results. From the evaluation we find that adding some form of text matching is crucial for retrieval, and that (unexpectedly) the duplicate removal step did not improve results.
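To illustrate the idea behind the third system, the following is a minimal sketch of how scores for mutually exclusive terms can be turned into probabilities that sum to one, while the remaining terms are scored independently. It is not the authors' implementation: the function names, the term names, and the score values are all hypothetical, and a softmax over each exclusive group is assumed here as one natural way to obtain the sum-to-one property.

```python
import math


def softmax(scores):
    """Map real-valued scores to probabilities that sum to one."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(score):
    """Independent probability for a term that is not in any exclusive group."""
    return 1.0 / (1.0 + math.exp(-score))


def predict_terms(scores, exclusive_groups):
    """Turn per-term scores into probabilities.

    scores: dict mapping term -> real-valued score (hypothetical model output).
    exclusive_groups: list of lists of mutually exclusive terms.
    """
    probs = {}
    grouped = set()
    for group in exclusive_groups:
        group_probs = softmax([scores[t] for t in group])
        for term, p in zip(group, group_probs):
            probs[term] = p
        grouped.update(group)
    # Terms outside every group are treated independently.
    for term, s in scores.items():
        if term not in grouped:
            probs[term] = sigmoid(s)
    return probs


# Hypothetical scores for one image; the term names are illustrative only.
scores = {"Day": 1.2, "Night": -0.4, "No Visual Time": 0.1, "Clouds": 0.8}
probs = predict_terms(scores, [["Day", "Night", "No Visual Time"]])
```

In this sketch the three time-of-day terms compete for a single unit of probability mass, whereas "Clouds" receives its own independent probability, which is the behavior a set of separately trained per-word classifiers would give to every term.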

Domains

Machine Learning [cs.LG]

Main file

verbeek09clef.pdf (251.37 KB)

DGMSV.png (440.14 KB)

Origin: Files produced by the author(s)

Format: Figure, Image