Category level object segmentation by combining bag-of-words models with Dirichlet processes and random fields

Abstract

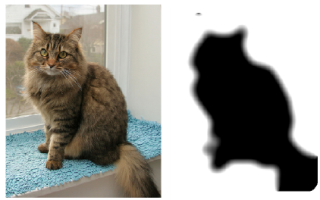

This paper addresses the problem of accurately segmenting instances of object classes in images without any human interaction. Our model combines a bag-of-words recognition component with spatial regularization based on a random field and a Dirichlet process mixture. Bag-of-words models successfully predict the presence of an object within an image; however, they cannot accurately locate object boundaries. Random fields take into account the spatial layout of images and provide local spatial regularization. Yet, because they rely on local coupling between image labels, they fail to capture the larger-scale structures needed for object recognition. These components are combined with a Dirichlet process mixture, which models images as a composition of regions, each representing a single object instance. Gibbs sampling is used for parameter estimation and object segmentation. Our model successfully segments object category instances, despite cluttered backgrounds and large variations in appearance and viewpoint. The strengths and limitations of our model are shown through extensive experimental evaluations. First, we evaluate the results of two methods for building visual vocabularies. Second, we show how to combine strong labeling (segmented images) with weak labeling (images annotated with bounding boxes), in order to limit the labeling effort needed to learn the model. Third, we study the effect of different initializations. We present results on four image databases, including the challenging PASCAL VOC 2007 data set, on which we obtain state-of-the-art results.
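To make the interplay between local appearance evidence and random-field regularization more concrete, the following is a minimal illustrative sketch of Gibbs sampling over a grid of patch labels with a Potts prior. It is not the authors' implementation: the Dirichlet process component is omitted, the per-patch log-likelihoods are synthetic stand-ins for a bag-of-words appearance term, and all names (`gibbs_sweep`, `demo`, `beta`) are assumptions made for this example.

```python
import numpy as np

def gibbs_sweep(labels, log_lik, beta, rng):
    """One Gibbs sweep over a grid of patch labels (illustrative only).

    labels:  (H, W) int array of current labels in [0, K).
    log_lik: (H, W, K) per-patch log-likelihoods; a hypothetical unary term,
             e.g. produced by a bag-of-words appearance model.
    beta:    Potts coupling strength; larger values favor smoother labelings.
    """
    H, W, K = log_lik.shape
    for i in range(H):
        for j in range(W):
            # Count how many 4-connected neighbors carry each label.
            counts = np.zeros(K)
            for di, dj in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                ni, nj = i + di, j + dj
                if 0 <= ni < H and 0 <= nj < W:
                    counts[labels[ni, nj]] += 1
            # Conditional posterior: unary evidence plus Potts agreement bonus.
            logits = log_lik[i, j] + beta * counts
            p = np.exp(logits - logits.max())
            p /= p.sum()
            labels[i, j] = rng.choice(K, p=p)
    return labels

def demo(seed=0):
    """Run a few sweeps on synthetic data (assumed setup, not from the paper)."""
    rng = np.random.default_rng(seed)
    H, W, K = 8, 8, 2
    # Synthetic ground truth: left half background (0), right half object (1).
    truth = np.zeros((H, W), dtype=int)
    truth[:, W // 2:] = 1
    # Noisy unary evidence that weakly prefers the true label.
    log_lik = rng.normal(0.0, 1.0, size=(H, W, K))
    log_lik[np.arange(H)[:, None], np.arange(W)[None, :], truth] += 1.5
    labels = rng.integers(0, K, size=(H, W))
    for _ in range(20):
        labels = gibbs_sweep(labels, log_lik, beta=1.0, rng=rng)
    return labels, truth
```

Calling `demo()` returns the sampled labeling together with the synthetic ground truth. Setting `beta=0` reduces the sampler to independent per-patch decisions, which is one way to see what the spatial coupling contributes.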

Domains

Machine Learning [cs.LG]

Main file

segmentation.pdf (2.12 MB)

Download file

LVJ.png (112.14 KB)

Download file

Origin: Files produced by the author(s)

Format: Figure, Image
